How to Deploy FLAN-T5 to Production on Serverless GPUs

January 24, 2023Deprecated: This blog article is deprecated. We strive to rapidly improve our product and some of the information contained in this post may no longer be accurate or applicable. For the most current instructions on deploying a model like FLAN-T5 to Banana, please check our updated documentation.

In this tutorial, we're going to demonstrate how you can deploy FLAN-T5 to production. The content is beginner friendly, Banana's deployment framework gives you the "rails" to easily run ML models like FLAN-T5 on serverless GPUs in production. We offer a tutorial video and a written deployment guide for to choose how you follow along.

For reference, we're using this FLAN-T5 base model from HuggingFace in this demonstration. Time to dive in!

What is FLAN-T5?

FLAN-T5 is an open source text generation model developed by Google AI. One of the unique features of FLAN-T5 that has been helping it gain popularity in the ML community is its ability to reason and explain answers that it provides. Instead of just spitting out an answer to a question, it can provide details around how it arrived at this answer. There are 5 versions of FLAN-T5 that have been released, in this tutorial we focus on the base model.

Some interesting use cases of FLAN-T5 are its translation capabilities (over 60 languages), ability to answer historical and general questions, and summarization techniques.

Video: FLAN-T5 Deployment Tutorial

Video Notes & Resources:

We mentioned a few resources and links in the tutorial, here they are.

- Creating a Banana account (click Sign Up).

- Access to the FLAN-T5 template repository.

In the tutorial we used a virtual environment on our machine to run our demo model. If you are wanting to create your own virtual environment use these commands (Mac):

- create virtual env:

python3 -m venv venv - start virtual env:

source venv/bin/activate - packages to install:

pip install banana_dev

Guide: How to Deploy FLAN-T5 on Serverless GPUs

1. Fork the FLAN-T5 Banana-compatible Repo

The first step is to fork this Banana-compatible FLAN-T5 repo to be your own private repository. This is the repository that you will use to deploy FLAN-T5 to Banana. Since the repository is already set up to run FLAN-T5 on Banana's serverless framework...this tutorial will be quite easy!

2. Create Banana Account and Deploy FLAN-T5

Before you deploy your FLAN-T5 repo to Banana, we highly encourage you to test your code before deploying to production. We suggest using Brev (follow this tutorial) to test.

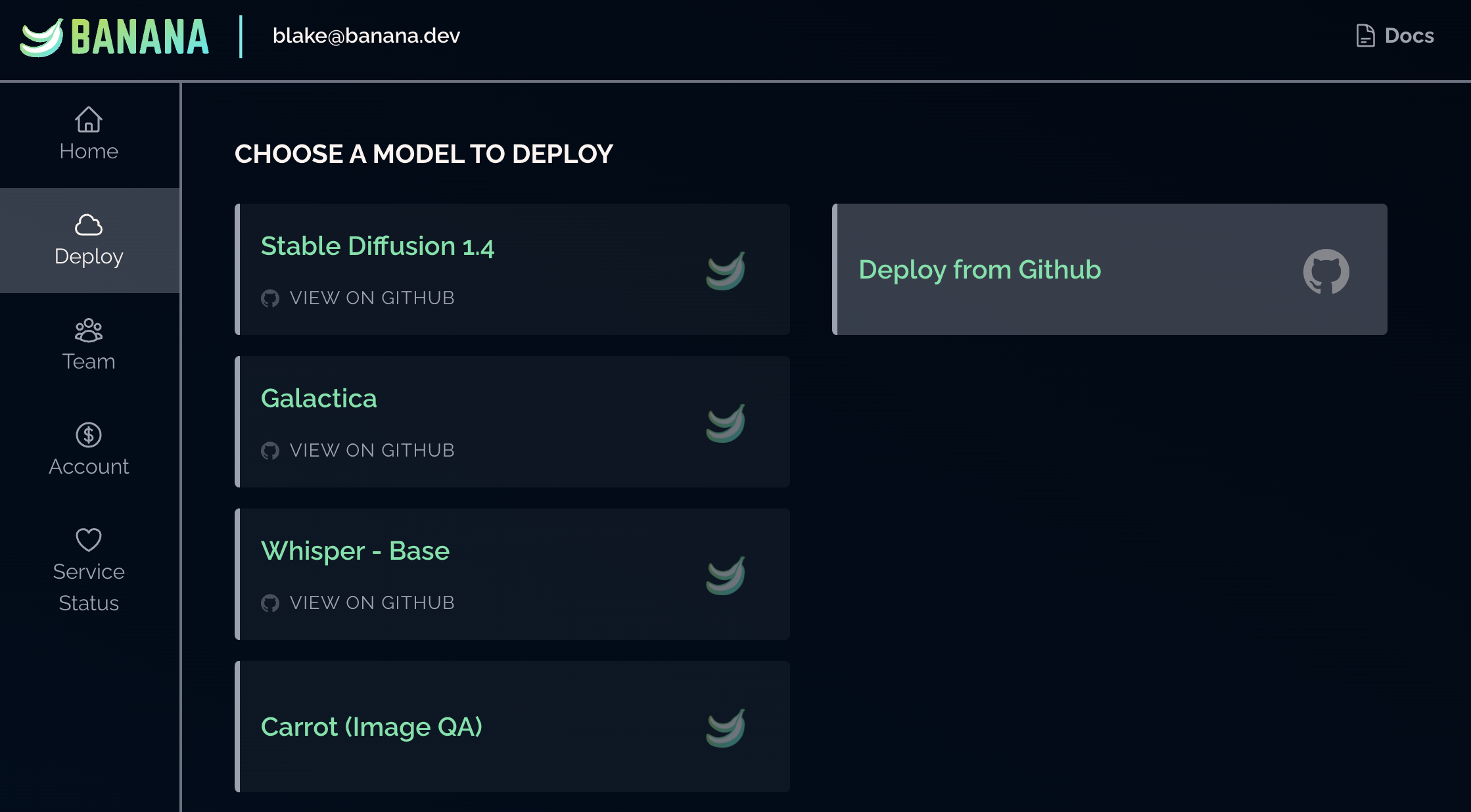

Once you have tested your repo, login to your Banana Dashboard and navigate to the "Deploy" tab.

Select "Deploy from GitHub Repo", and choose your FLAN-T5 repository. Click "Deploy" and the model will start to build. The build process can take up to 1 hour so please be patient.

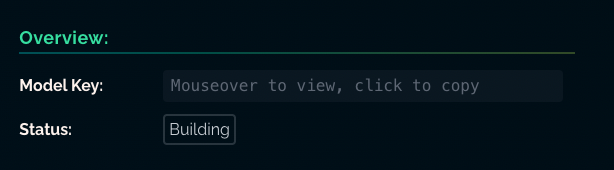

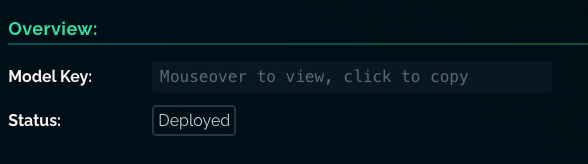

You'll see the Model Status change from "Building" to "Deployed" when it's ready to be called.

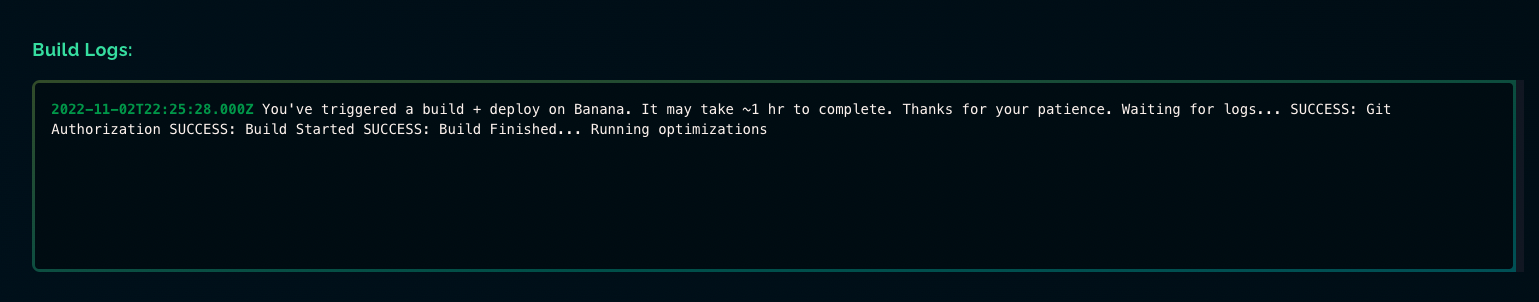

You can also monitor the status of your build in the Model Logs tab.

4. Call your FLAN-T5 Model

After your model has built, it's ready to run in production! Choose the programming language you plan to use (Python, Node, Go) and then jump over to the Banana SDK. Within the SDK you will see example code snippets of how you can call your FLAN-T5 model.

That's it! Congratulations on running FLAN-T5 on serverless GPUs. You are officially deployed in production!

Wrap Up

Reach out to us if you have any questions or want to talk about FLAN-T5. We're around on our Discord or by tweeting us on Twitter. What other machine learning models would you like to see a deployment tutorial for? Let us know!