How to Deploy and Run Dolly 2.0

April 24, 2023

Deprecated: This blog article is deprecated. We strive to rapidly improve our product and some of the information contained in this post may no longer be accurate or applicable. For the most current instructions on deploying a model like Dolly 2.0 to Banana, please check our updated documentation.

Today we are going to take you on a journey to show you how to deploy Dolly 2.0 on serverless GPUs. For this tutorial, we'll deploy Dolly as a chatbot within your own terminal. Lucky for you, this journey is quick and easy!

You do not need to be an AI expert to follow along. Some basic Python coding experience is helpful, but you could get away with copying and pasting our code and being a good instruction follower.

This tutorial is so simple to follow because we are using a Community Template. Templates are user-submitted model repositories that are optimized and set up to run on Banana's serverless GPU infrastructure. All you have to do is click deploy, and in a few minutes your model is ready to use on Banana. Pretty sweet! Here is the Dolly 2.0 model template we'll be using in this tutorial.

What is Dolly 2.0?

Dolly 2.0 is the world's first open source, instruction-trained large language model (LLM) that is licensed for research and commercial use. Dolly 2.0 was developed by Databricks and was trained on a human-generated instruction dataset. All aspects of the model have been open sourced (training code, dataset, and model weights), which is quite exciting.

Dolly 2.0 Deployment Tutorial

Step 1: Deploy the Dolly 2.0 template

Here is the community template for Dolly 2.0 that we are using in this tutorial. Sign up for Banana (if you haven't already), and deploy this template. It takes one click and a few minutes to deploy. Pretty darn simple.

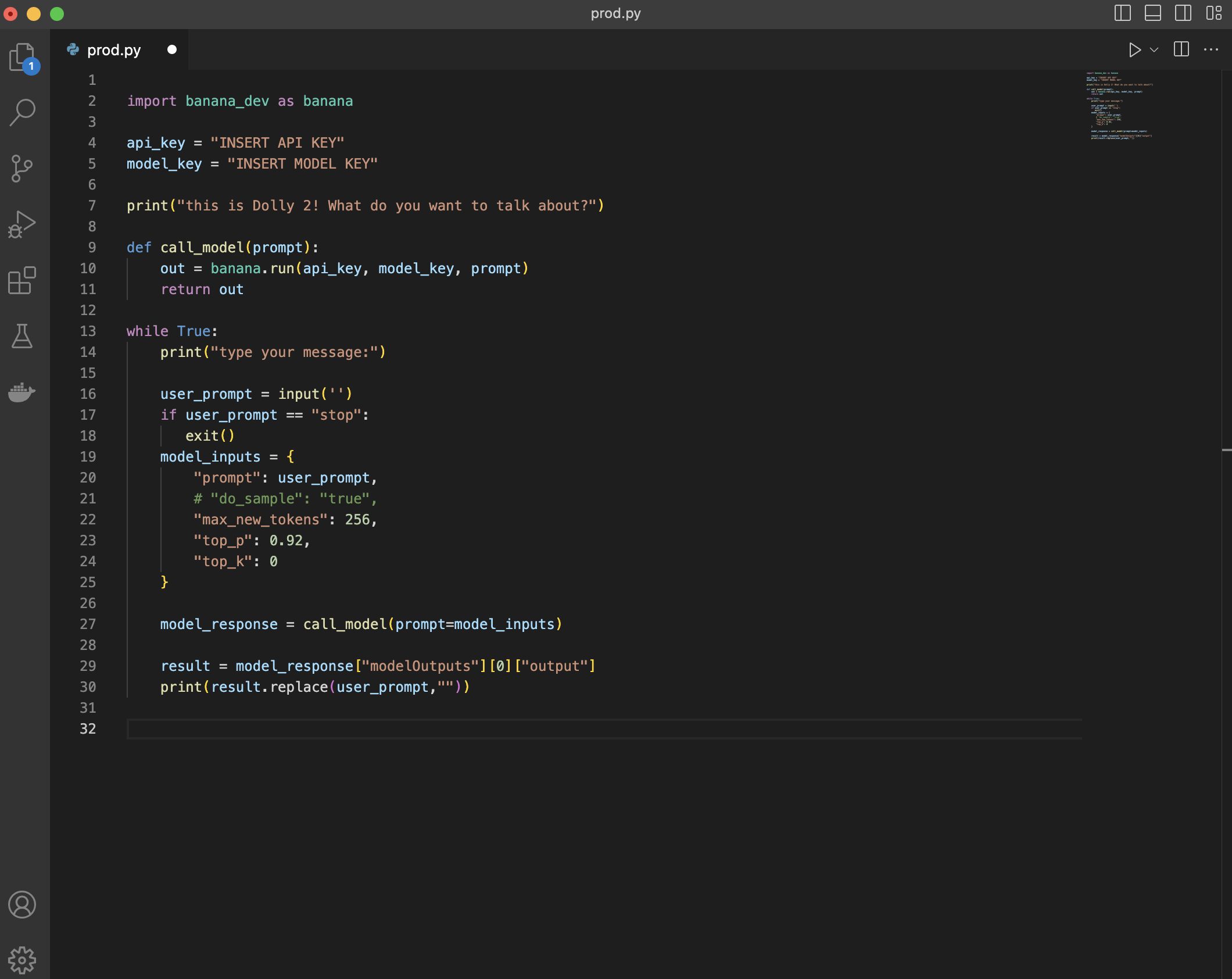

Step 2: Write your test file for Dolly

Once your model is deployed, open up a code editor and write the code for your test file. AKA - copy and paste the Python snippet below (just add in your Model Key and API Key). Now remember, for this demo we are building chatbot-like functionality within a terminal with Dolly. That means this code snippet has a few more components to it than you would need if you simply wanted to run your Dolly 2.0 model with a basic test call.

```python
import banana_dev as banana

# Paste the keys from your Banana dashboard here
api_key = "insert API key"
model_key = "insert model key"

print("this is Dolly 2! What do you want to talk about?")

def call_model(prompt):
    # Send the inputs to the deployed model and return the raw response
    out = banana.run(api_key, model_key, prompt)
    return out

while True:
    print("type your message:")
    user_prompt = input('')

    # Typing "stop" ends the chat
    if user_prompt == "stop":
        break

    model_inputs = {
        "prompt": user_prompt,
        # "do_sample": "true",
        "max_new_tokens": 256,
        "top_p": 0.92,
        "top_k": 0
    }

    model_response = call_model(prompt=model_inputs)
    result = model_response["modelOutputs"][0]["output"]

    # Remove the echoed prompt so only Dolly's reply is printed
    print(result.replace(user_prompt, ""))
```

The main components that we added in this code snippet to give it the chatbot functionality are:

- a while loop to continuously ask for and take in user input for the chatbot prompts

- the ability to end the while loop when you are ready to stop talking with your chatbot

- a function that calls the model

- a few tweaks to the output to make it look nice and tidy, so it feels like a chatbot is speaking with you
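If you want to try the model-calling and output-tidying pieces without spending GPU time, here is a minimal sketch that separates them into small functions. It assumes the same response shape used in this tutorial (`modelOutputs[0]["output"]`). The helper names `build_inputs`, `extract_reply`, `ask_dolly`, and `fake_run` are ours for illustration and are not part of the Banana SDK. Pass `banana.run` as the `run` argument to talk to your real deployment.

```python
def build_inputs(user_prompt, max_new_tokens=256, top_p=0.92, top_k=0):
    """Build the model inputs dict used in this tutorial."""
    return {
        "prompt": user_prompt,
        "max_new_tokens": max_new_tokens,
        "top_p": top_p,
        "top_k": top_k,
    }


def extract_reply(model_response, user_prompt):
    """Pull the generated text out of the response and drop a leading prompt echo."""
    outputs = model_response.get("modelOutputs") or []
    if not outputs:
        raise ValueError(f"no modelOutputs in response: {model_response!r}")
    text = outputs[0].get("output", "")
    # Only strip the prompt when it is echoed at the very start of the output
    if text.startswith(user_prompt):
        text = text[len(user_prompt):]
    return text.strip()


def ask_dolly(run, api_key, model_key, user_prompt):
    """Call the model through `run` (for example banana.run) and return a tidy reply."""
    response = run(api_key, model_key, build_inputs(user_prompt))
    return extract_reply(response, user_prompt)


def fake_run(api_key, model_key, model_inputs):
    """Hypothetical stand-in for banana.run that echoes the prompt, then answers."""
    return {"modelOutputs": [{"output": model_inputs["prompt"] + " Hello from a stub."}]}


print(ask_dolly(fake_run, "api-key", "model-key", "Hi Dolly."))
```

One design difference from the tutorial snippet: this sketch removes the prompt only when it appears at the start of the output, so a reply that happens to repeat your words later on is left intact.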

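Make sure your indentation is correct. Python is whitespace-sensitive, so the body of `call_model` and everything inside the `while` loop must be indented consistently.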

Step 3: Run Dolly 2.0! (on Serverless GPUs)

We've made it to the end of the demo! All we need to do is run your Dolly model in production. To do this, make sure you:

- Save your Python file

- Open a terminal and start a virtual environment

- Install the Banana package with `pip install banana_dev`

- Run your file (`python3 <filename>.py`)

Hint - to start a virtual environment, use these consecutive commands:

```
python3 -m venv venv
source venv/bin/activate
```
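If the run fails with an import error, it is usually because the virtual environment is not active or the package was installed somewhere else. A quick way to check both, sketched with only the Python standard library (the function names `in_virtualenv` and `package_available` are ours, for illustration):

```python
import importlib.util
import sys


def in_virtualenv():
    """Return True when Python is running inside a venv-style virtual environment."""
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


def package_available(name):
    """Return True when a top-level package can be imported from this interpreter."""
    return importlib.util.find_spec(name) is not None


print("virtual environment active:", in_virtualenv())
print("banana_dev installed:", package_available("banana_dev"))
```

Run it with the same `python3` you use for your chatbot file. If either line prints `False`, repeat the activation or install step above.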

Congrats! You have successfully created a Dolly 2.0 chatbot in your terminal that runs on serverless GPUs. How easy was that?! Thank you for following along.

Wrap Up

How did we do? Were you able to deploy Dolly 2.0? Drop us a message on our Discord or tweet at us on Twitter. What other machine learning models would you like to see a deployment tutorial for? Let us know!