Changelog #039

November 10, 2023

Follow along on Twitter for detailed updates and improvements made to Banana.

Bananalytics

Analytics are incredibly helpful for understanding the health of your project and the experience your end-users are seeing.

You can now view request volume, errors, and latency metrics for each project:

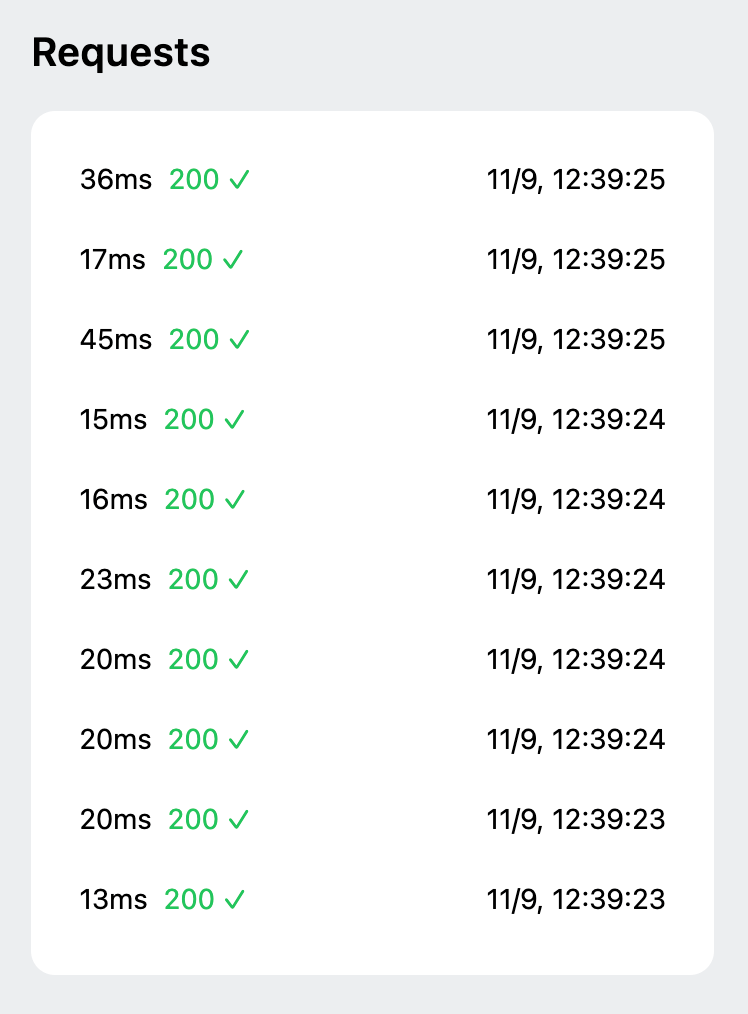

Request History

You can now view queued and recently completed requests:

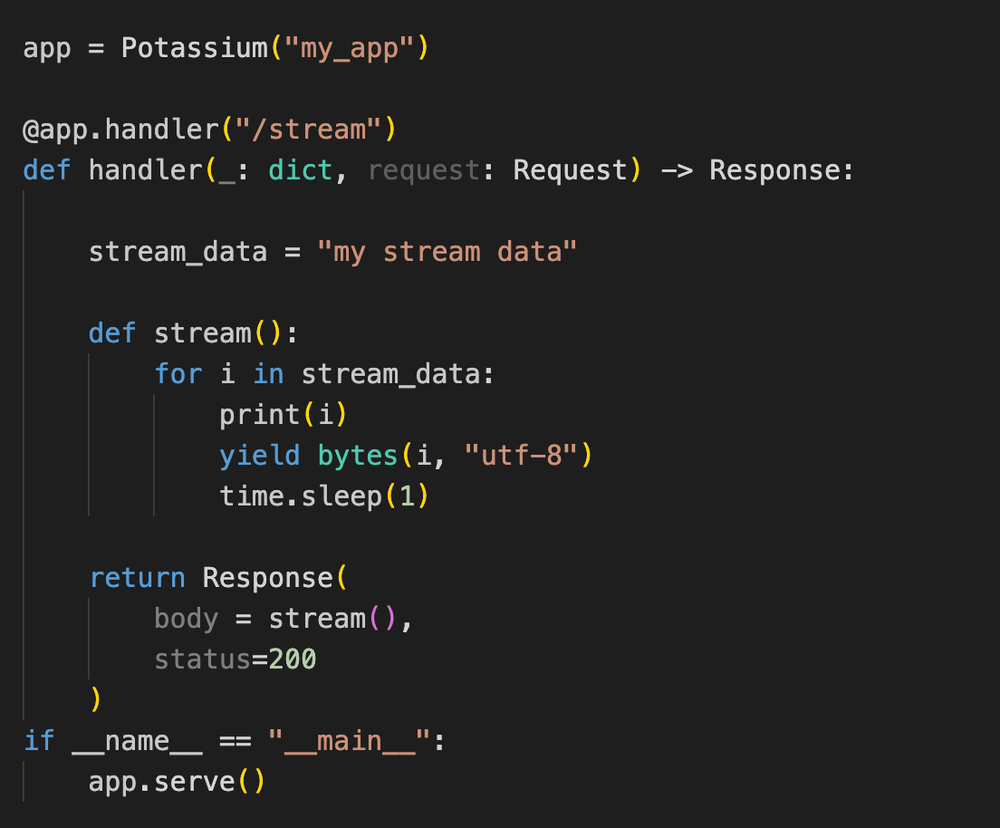

Response Streaming

Starting with Potassium version 0.4.0, you can return a bytes body in the Response, which unlocks:

✨ Response Streaming ✨

This is a huge unlock for latency-critical applications, where users are on the other end waiting for a response.

In these cases, the critical churn point for end users is when they're watching a progress spinner spin. After a few seconds, their attention drifts. After a few more, they may bounce.

Response streaming allows you to print tokens or paint intermediate images in front of your users as soon as they become available, giving your users a feeling of progress.

On Banana, you can now do this with our Potassium framework. Write a generator function that yields bytes and pass that generator as the Response body. Potassium then starts sending data back to the client the moment each chunk becomes available.

This will stream the results back to the caller in chunks.
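The generator half of this pattern can be sketched in plain Python, independent of Potassium. `fake_tokens` and `stream_tokens` are hypothetical names, and `fake_tokens` only stands in for a real model's token loop. The sketch deliberately omits the Potassium handler itself; check the Potassium 0.4.0 documentation for the exact Response arguments.

```python
import time
from typing import Iterator


def fake_tokens(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a model that produces one token at a time."""
    for word in f"Echo: {prompt}".split(" "):
        time.sleep(0.05)  # simulate per-token inference latency
        yield word + " "


def stream_tokens(prompt: str) -> Iterator[bytes]:
    """Yield each token as UTF-8 bytes as soon as the model produces it."""
    for token in fake_tokens(prompt):
        yield token.encode("utf-8")


if __name__ == "__main__":
    gen = stream_tokens("hello streaming world")
    # Nothing runs until next() is called; each call advances the model just far
    # enough to produce one chunk, which is what lets a server flush it right away.
    print(next(gen))  # b'Echo: '
    print(b"".join(gen))
```

Because the generator is lazy, the first chunk is ready after one token's worth of work rather than after the whole response has been computed. That delay is the spinner time streaming is meant to cut.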

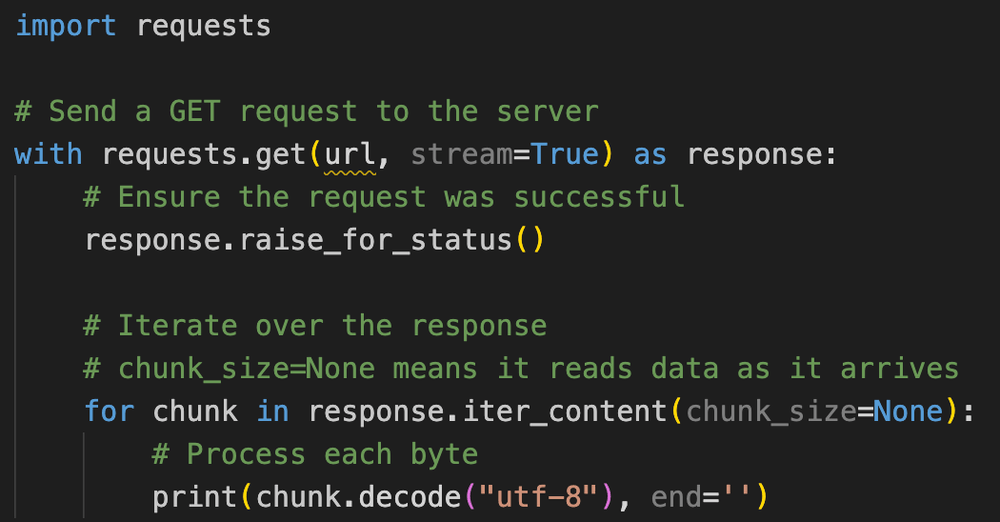

This is a common pattern, so most HTTP clients support receiving streamed data. With Python requests, for example, the client passes `stream=True` and reads the body incrementally with `iter_content` instead of waiting for the full response.
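Here is a minimal client sketch using requests. The function name `stream_text`, the JSON payload shape, and the 60-second timeout are assumptions for illustration, not part of Banana's API. Point it at your own deployment's URL.

```python
import codecs
from typing import Iterator

import requests


def stream_text(url: str, payload: dict, timeout: float = 60) -> Iterator[str]:
    """POST payload to url and yield decoded text as each chunk arrives."""
    # Incremental UTF-8 decoder: a multi-byte character split across two
    # chunks is held back until its remaining bytes arrive.
    decoder = codecs.getincrementaldecoder("utf-8")()
    # stream=True stops requests from downloading the whole body up front.
    with requests.post(url, json=payload, stream=True, timeout=timeout) as response:
        response.raise_for_status()
        # chunk_size=None yields data in whatever sizes it is received.
        for chunk in response.iter_content(chunk_size=None):
            text = decoder.decode(chunk)
            if text:
                yield text
    # Flush any bytes still buffered in the decoder.
    tail = decoder.decode(b"", final=True)
    if tail:
        yield tail
```

Usage: `for piece in stream_text(url, {"prompt": "Hello"}): print(piece, end="", flush=True)`. The `with` block sits inside the generator, so if the caller stops iterating early, the connection is still closed.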

Percent Utilization Autoscaling

This is an advanced autoscaling feature for users with high-volume traffic who want to avoid cold boots as much as possible.

Percent Utilization Autoscaling keeps extra replicas warm, so a sudden traffic spike is handled by a warm instance instead of waiting on a cold boot.

Percent Util = Active Replicas / Total Replicas

Rearranging, our autoscaler sets your total replicas using this formula:

Total Replicas = Active Replicas / Percent Util

Note that Min Replicas, Max Replicas, and Idle Timeout take precedence over this formula.
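The formula can be sketched as a small function. This is not Banana's autoscaler. It assumes that Percent Util is given as a whole-number percentage, that the result is rounded up to a whole replica, and that Min and Max Replicas are applied as a clamp afterward. Idle Timeout is not modeled. `target_replicas` is a hypothetical name.

```python
def target_replicas(active: int, percent_util: int, min_replicas: int, max_replicas: int) -> int:
    """Replicas needed so that active / total stays at or below percent_util percent.

    Sketch only: assumes rounding up to a whole replica, then clamping to
    [min_replicas, max_replicas]. Idle Timeout is not modeled.
    """
    if not 0 < percent_util <= 100:
        raise ValueError("percent_util must be between 1 and 100")
    # Total Replicas = Active Replicas / (Percent Util / 100), rounded up
    # using integer ceiling division to avoid floating-point surprises.
    desired = -(-active * 100 // percent_util)
    # Min Replicas and Max Replicas take precedence over the formula.
    return max(min_replicas, min(desired, max_replicas))
```

With Percent Util at 50% and 3 busy replicas, `target_replicas(3, 50, 0, 10)` returns 6, leaving 3 warm replicas to absorb a spike. At 70% it returns 5, because 3 / 0.7 ≈ 4.3 rounds up. Lower Percent Util values buy more headroom at the cost of more idle replicas.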

This setting is available to you in the project settings.

That's all!

Thanks everyone for reading up on our week. Until the next one!

If you have any feature suggestions, improvements, or bug reports, send us a message or let us know in #support or #feature-requests on Discord.