5 Signs You Need Serverless GPUs

June 01, 2022

One of the benefits of being the provider of serverless GPU inference hosting is that we get to work with founders and teams across machine learning and hear their pain points.

From these hundreds of conversations, we identified the most common signs a machine learning team will encounter as they recognize the need for serverless GPUs. Hopefully you find these signals helpful and are able to move to serverless GPUs before any of these signs become a roadblock for your team and product.

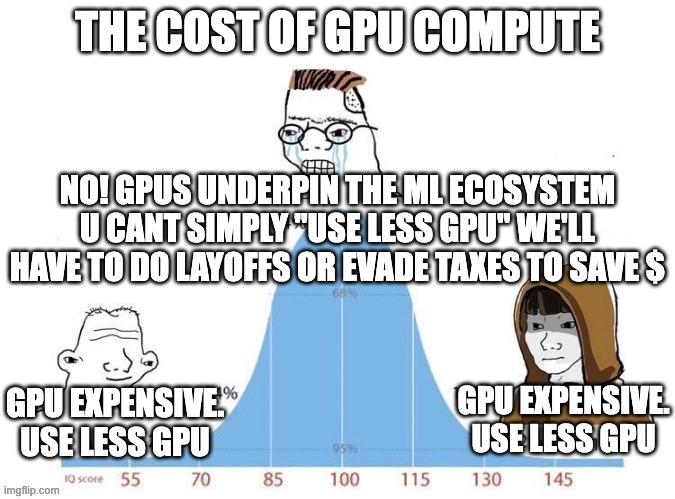

1. Price - "always-on" GPUs are too expensive

Nearly every customer that implements serverless GPUs will experience meaningful cost savings, but price is a substantial pain point for two common types of customers who come to Banana to set up serverless.

The first is the indie hacker, hobbyist, or student who can't afford the price of an "always-on" GPU and doesn't yet have the traffic to use 100% of a GPU's compute capacity. They tend to look for a solution that costs less each month and bills only for the GPU compute they actually use (which is precisely what serverless GPUs offer).

The second common customer with this pain point is an ML team whose product has reached a level of scale where usage is variable but well beyond what one "always-on" GPU can handle. Typically they come to Banana with multiple "always-on" GPUs running and inference hosting costs that are growing rapidly, sometimes at an unsustainable pace. In this case, serverless GPUs benefit them by decreasing hosting costs and improving unit economics as they scale.

Going serverless means you only pay for the GPU resources you use (utilization time) rather than "always-on" GPU costs. In other words, if your product only needs the compute of 1/4 of a GPU, you only pay for 1/4 of a GPU. When your product has moments with no usage, you don't pay for those either. Contrast this with an "always-on" GPU, where you pay for the entire GPU to run 24/7, regardless of what percentage of its compute power you actually use.
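To make the 1/4-of-a-GPU comparison concrete, here is a rough back-of-the-envelope sketch. The hourly rate, the 30-day month, and the assumption that both billing models charge the same price per GPU-hour are all made up for illustration; real pricing differs by provider and this is not Banana's price list.

```python
HOURS_PER_MONTH = 24 * 30  # simplifying assumption: a 30-day month


def always_on_cost(hourly_rate: float, gpus: int = 1) -> float:
    """Monthly cost of GPUs that run 24/7, regardless of utilization."""
    return hourly_rate * HOURS_PER_MONTH * gpus


def serverless_cost(hourly_rate: float, busy_hours: float) -> float:
    """Monthly cost when billed only for the hours the GPU is actually busy."""
    return hourly_rate * busy_hours


# Hypothetical numbers, for illustration only.
rate = 1.00         # dollars per GPU-hour (assumed equal for both models)
utilization = 0.25  # the product needs about 1/4 of a GPU on average

fixed = always_on_cost(rate)
metered = serverless_cost(rate, busy_hours=utilization * HOURS_PER_MONTH)

print(f"always-on:  ${fixed:.2f}/month")
print(f"serverless: ${metered:.2f}/month")
print(f"savings:    {1 - metered / fixed:.0%}")
```

If a serverless provider charges more per unit of compute than an always-on instance, the savings shrink accordingly, so it is worth plugging in your own rates and utilization.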

2. Your product has spiky or variable usage

This point piggybacks off the previous one: if you are paying for "always-on" GPUs and your product usage is variable, then it's very likely you are wasting money keeping GPUs active when you don't need the compute.

A useful analogy: Do you leave your car running during the night while you are sleeping or during the day when you are at your desk working? For most people, the answer is no. Why would you keep the car running and pay for that fuel usage when you don't need it?

The same principle applies to GPU compute. Don't keep GPUs running during downtime when you don't have the customer traffic that justifies the compute of a GPU.
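As a rough illustration of how much "engine idling" a spiky day can involve, the sketch below compares GPU-hours provisioned for the peak against GPU-hours actually used. The hourly demand profile is invented, and the serverless side is idealized (it ignores cold starts and any per-request overhead).

```python
import math

# Hypothetical demand, in GPUs' worth of compute, for each hour of one day.
hourly_demand = [
    0, 0, 0, 0, 0, 0,        # overnight: no traffic
    0.5, 1, 2, 3, 3, 2,      # morning ramp-up
    2, 3, 4, 3, 2, 1,        # afternoon peak
    1, 0.5, 0, 0, 0, 0,      # evening wind-down
]

# Always-on: provision whole GPUs for the peak hour and keep them up all day.
peak_gpus = math.ceil(max(hourly_demand))
always_on_gpu_hours = peak_gpus * len(hourly_demand)

# Serverless (idealized): billed only for the compute actually used.
used_gpu_hours = sum(hourly_demand)

idle_share = 1 - used_gpu_hours / always_on_gpu_hours
print(f"provisioned: {always_on_gpu_hours} GPU-hours")
print(f"used:        {used_gpu_hours} GPU-hours")
print(f"idle share:  {idle_share:.0%}")
```

The spikier the profile, the larger the gap between peak-provisioned and used GPU-hours, which is the fuel burned while the car sits parked.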

3. Scaling fast and need reliable infrastructure to handle growth

When your product is growing rapidly, it's a nightmare trying to stay ahead of customer demand and make sure your GPU quota keeps up with your usage. Inevitably there will be moments when you don't have the GPU quota to keep up, and calls will queue up, adding latency and hurting your customer experience.

If this sounds like you, it's probably also true that you don't have time to consider building production infrastructure in-house. You need inference hosting ASAP that is reliable, easy to implement, and can scale based on demand without affecting your customer experience.

This is one of the core advantages of serverless GPUs: practically unlimited scaling, both up and down, based on usage demand. If you need this solution to be easy to implement and headache-free, partner with a provider like Banana. It can be a fast track to cutting-edge production infrastructure.

4. Current GPU hosting provider sucks to deal with (AWS, GCP)

Surprisingly, this is a real complaint we hear from customers who move to Banana. For example, they might try to increase their GPU quota and never hear back from their hosting provider. Or, in some cases, simple customer support tickets go unanswered for multiple weeks. In short, to their hosting provider they are a small fish in a big pond and are cast aside.

The annoying part about this pain point is that if you are an ML team with a rapidly growing product that needs GPU quota increases or some degree of customer support, this issue most likely affects your customers' experience.

5. Free up engineering resources to focus on product

Your engineering team's highest value is working on your product, not hosting infrastructure. Rather than re-invent the wheel and try to build model hosting infra in-house, partner with a hosting solution that handles the dirty work for you.

If you are curious about how much engineering effort it would take to build this infrastructure in-house, we wrote an article detailing how to scale production infrastructure to 1M+ users that touches on this topic. To put it simply, you are looking at multiple months of dedicated engineering time to build infrastructure in-house, compared to less than 7 days of implementation time with a serverless product like Banana.